ASUS Unveils Game-Changing Liquid-Cooled AI Infrastructure Powered by NVIDIA Vera Rubin Platform

ASUS unveiled its fully liquid-cooled AI infrastructure at NVIDIA GTC 2026 (Booth #421), delivering a comprehensive, end-to-end solution powered by the NVIDIA Vera Rubin platform. Under the theme "Trusted AI, Total Flexibility," this customizable framework spans rack-scale AI factories, desktop AI supercomputing, edge AI, and enterprise AI solutions. It enables enterprises and cloud providers to build high-performance, energy-efficient, large-scale AI clusters with dramatically reduced PUE and TCO.

ASUS is a provider of NVIDIA GB300 NVL72 and NVIDIA HGX B300 systems, and its flagship offering is the ASUS AI POD built on the NVIDIA Vera Rubin platform, a liquid-cooled, rack-scale system designed for massive AI workloads. Through strategic partnerships with leading cooling and component providers, ASUS offers diverse cooling modalities, tailored thermal solutions, and redundancy to meet a wide range of enterprise requirements. Backed by successful deployments for clients worldwide, ASUS provides expert consultation, a broad portfolio of AI and storage solutions, seamless infrastructure deployment, application integration, and ongoing services, combining scalability and sustainability to drive business value and intelligence.

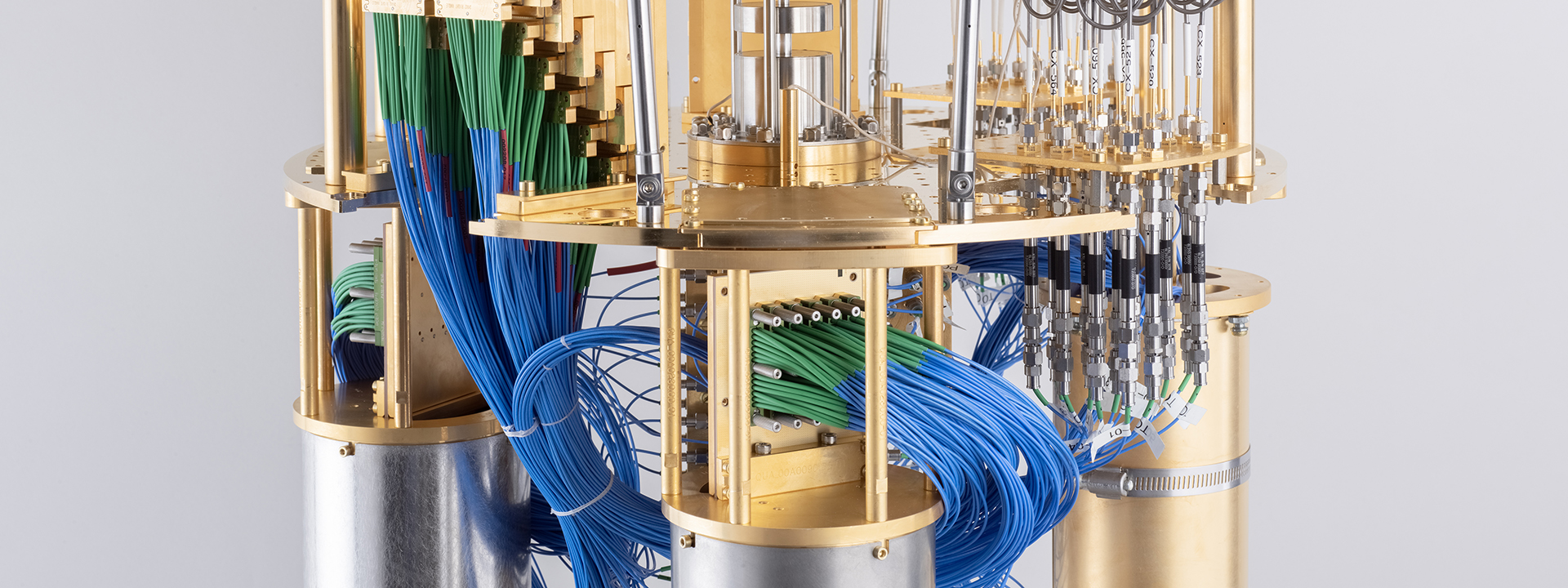

At the forefront is the flagship XA VR721-E3, a 100% liquid-cooled rack-scale system built on NVIDIA Vera Rubin NVL72. It offers a TDP of up to 227 kW (MaxP) or 187 kW (MaxQ), delivers up to 10X higher performance per watt, and is purpose-built for trillion-parameter models and large-scale AI factories. Partnering with Vertiv, a global leader in critical digital infrastructure, Schneider Electric, and other leading providers, ASUS delivers a full-stack power and cooling infrastructure designed for zero-throttle performance, from standard deployments to advanced liquid cooling, with redundancy tailored to each specific need.

To address rigorous data center demands, ASUS also introduces its latest server series built on NVIDIA HGX Rubin NVL8 systems, featuring eight NVIDIA Rubin GPUs connected via sixth-generation NVIDIA NVLink with integrated 800G bandwidth per GPU. To facilitate a seamless and cost-effective transition to liquid cooling, ASUS offers two distinct solutions: the XA NR1I-E12L, a hybrid-cooled option, and the XA NR1I-E12LR, a 100% liquid-cooled system. The hybrid-cooled XA NR1I-E12L combines direct-to-chip (D2C) liquid cooling for the NVIDIA HGX Rubin NVL8 baseboard with air cooling for the dual Intel® Xeon® 6 processors.

The portfolio is further strengthened by high-performance scalable servers that provide a solution for every demanding AI workload: the XA NB3I-E12, built on NVIDIA HGX B300 systems; the ESC8000A-E13X, based on NVIDIA MGX and integrated with NVIDIA ConnectX-8 SuperNICs for extreme GPU-to-GPU connectivity; and the ESC8000A-E13P, accelerated by NVIDIA RTX PRO 4500 Blackwell Server Edition or NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs, which delivers breakthrough performance for demanding data processing, AI, video, and visual computing workloads in a power-efficient design.

The tangible impact of the complete ASUS AI Factory concept is already demonstrated through several successful customer deployments in which the ASUS ESC8000 series powered a production-line digital twin built on NVIDIA Omniverse libraries and integrated with NVIDIA's customizable multi-camera tracking workflow. This enabled remote simulation and significantly reduced deployment risks, with the entire process managed for seamless, low-disruption deployment and maximum value from day one.

To support these powerful systems and democratize AI development, ASUS has also established a robust data ecosystem by partnering with NVIDIA-Certified storage providers, including IBM, DDN, WEKA, and VAST Data, to deliver scalable, resilient solutions for memory-intensive AI. ASUS offers a full spectrum of storage solutions, spanning block storage (VS320D-RS12), JBOD (VS320D-RS12J), object storage (OJ340A-RS60), and software-defined systems, ensuring flexibility from edge to cloud and from enterprise applications to AI and HPC workloads.
